“They don’t care about it,” said Vinod Menon, the noted Stanford neuroscientist who’s deeply engaged in research on music and the brain. He was sitting in a studio at Stanford’s Center for Computer Research in Music and Acoustics, where he’d just given a talk at the university’s 4th Annual Music and the Brain Symposium, hypothesizing about why our hairy cousins get no kick from Chopin. He drew on his ongoing research into the way the brain processes music and other aural information, including language, as well as which neural systems are involved and how they link up.

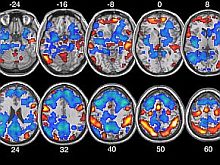

Menon and his team are also probing the reward pathways that music lights up in most people but that don’t seem to fire in those with depression and anhedonia. They’re putting depressed and cheery people into an fMRI tube — the f stands for functional, the MRI for magnetic resonance imaging — playing them Mozart through headphones and mapping their brain activity with images generated every two seconds. All kinds of new studies about how the brain deals with music, and how music affects the brain, are going on at Stanford, Harvard, and other institutions, furthering knowledge of neural systems and opening the door to understanding things like autism and attention deficit disorder.

Chatting between talks by brain and music mavens about improvisation — the general theme of this year’s high-powered powwow — Menon spoke about the paper he’d delivered, titled “Saliency, Attention, and Synchronization of Brain Responses During Music Listening, or Why Monkeys Don’t Listen to Music.”

Mapping “Salient Events”

There’s a core system involved in detecting “salient events,” which include stimuli like “the complex sequences of sounds we call music,” he explained. Whatever the brain reads as mattering or worthy of attention triggers a change in heart rate or another physical response. “The behavioral evidence is that the monkeys don’t detect this and don’t pay much attention. They don’t have the same neuroanatomy. The circuits and the neurons that facilitate this process are different,” said the gentle-voiced professor.

Illustration by Thomas Fuchs

He has based his new hypothesis on various data: his work mapping the brain systems that set up expectation, respond to change, and create memory; a 2007 monkey-and-music study, by Harvard evolutionary psychologist Marc Hauser and MIT perceptual scientist Josh McDermott, which showed that marmoset monkeys prefer a silent space to one with music; and other data about ape-brain volume and anatomy, including a study by California Institute of Technology researchers looking at MRI scans of humans and monkeys.

In monkey brains, Menon went on, some of the regions containing the saliency detection network “are either smaller or configured differently, and have different densities of key neurons, which play a role in signaling within this network and between networks.” One is the von Economo neuron, a hot-wire cell that may play a role in the fast-acting saliency network. The apes “have a lot fewer of them,” said the neuroscientist, who put up a graphic during his PowerPoint presentation showing that monkeys have 6,950 von Economo neurons in their “core regions,” compared to 193,000 in humans. No wonder monkeys can’t get into Thelonious Monk.

Menon published a 2007 study with This Is Your Brain on Music author Daniel Levitin, Stanford music professor Jonathan Berger, and others, suggesting how neurological systems create expectation and respond to changes in music during brief moments of silence. He’s currently giving a prepublication polish to a Stanford study revealing that the syntax of spoken language and the syntax of music are processed by the same brain structures and neural resources. The study was spearheaded by Menon’s advisee Daniel Abrams, a Stanford postdoctoral fellow who gave a Music and the Brain Symposium talk on “Cognitive-sensory interaction in the neural encoding of music and speech.”

Abrams and his colleagues studied brain scans of people listening to Beethoven’s Fifth Symphony, Franklin D. Roosevelt’s “fireside chats,” and other famous pieces of music and oratory. They altered and manipulated the spoken and musical structures to see which parts of the brain were sensitive to such manipulation.

“Lizard Brain” Likes It, Too

“We found there is a broad network, in the temporal and frontal lobes and also extended into the brain stem, that is sensitive to syntax in music and speech,” Abrams said during an interview. This is news, he noted, for a few reasons. “First of all, because the brain stem is involved. It’s considered lizard brain, a low-level part of the brain. It was previously not thought to play any significant role in music or language processing.”

The research, Abrams went on, also “tells us that the brain doesn’t function like a ladder, like a hierarchy. It’s not one step, then another step, then another. I think the brain functions like a big network; things are connected in both directions. And that music and speech processing are so biologically important in the network, the entire brain — including the brain stem — becomes involved in the processing.”

This kind of research, he added, “has implications with respect to certain clinical populations. It’s been shown that many reading-impaired or dyslexic individuals have abnormal and poor auditory function. And it’s been shown that musical training engages the very same structures that are important for speech. Music and speech share similar brain structures. The idea is that we might be able to help reading-impaired people with musical training.”

In addition to their work using music to explore reward pathways, Menon and his inquisitive crew are also hoping to start a series of studies dealing with music, emotion, and the brain. Other current work that intrigues Menon is the research that Gottfried Schlaug is doing at Harvard on how the brains of kids learning to play musical instruments change both anatomically and functionally.

“His research is showing that [in] children who start training at a very early age, their brain, their anatomy, changes.” Those findings will lead to other studies to see whether musical training “has transfer effects on attention in other domains.”

Music and Cognition

Working with Dartmouth colleague Daniel Ansari, Berkowitz mapped the brain activity of musicians who were given a little five-note keypad and five simple melodic patterns and then cued to play the pitches or patterns however they wished for 40 seconds. They could play to a metronome click or set their own tempo. The researchers saw three brain areas involved: the dorsal premotor cortex, engaged in choosing and generating motor patterns; the anterior cingulate cortex, involved in decision making; and the inferior frontal gyrus, “an area known to be involved in language production and language perception,” said Berkowitz, a rosy-cheeked man with a full brown beard and long ponytail. “It’s known also to be involved in music perception. And we’ve now shown that it’s involved in music production, as well.”

Asked about the larger implications and possible application of this kind of research, Berkowitz mentioned a study Schlaug is doing with stroke victims who have lost the ability to speak but not to sing. Using something called melodic intonation therapy, experts in the field “have shown that you can train these people, through singing, to regain some language function.”

Some researchers even claim they’ve cooked up a musical formula that can treat people with depression, anxiety, and insomnia. Vera Brandes, director of research in music and medicine at Salzburg’s Paracelsus Private Medical University, has cofounded a company that plans to sell musical medication by prescription.

“I am the first musical pharmacologist,” Brandes told The New York Times in March. Sanoson, a company she helped start, which customizes music systems for hospitals and medical offices, plans to start marketing Brandes’ treatment next year. After being diagnosed, patients are given a special listening device and headset and prescribed a set of original music composed to treat their particular malady. The secret ingredients are being trademarked.

Music Therapy: Proceed With Caution

Nobody doubts that music can soothe “the savage breast” and alter mood. Berger, a composer and researcher who directs the Stanford Institute for Creativity and the Arts, which organized the symposium, was interested to read about Brandes in the Times. Asked to comment on her claim to have bottled magic music that can cure psychic ills, he was diplomatically skeptical.

“I think there’s enormous therapeutic potential in understanding the neuroscience of music. But it’s a long, careful road we need to take to get there,” said Berger, who’s involved in a number of research and clinical projects with his students. One uses electroencephalograms (EEGs) to study how the brain creates musical expectations and reacts to the violation of those expectations. He dreams of finding a way to measure surprise: “a surprise meter,” he said with a laugh. A project with a Stanford oncologist uses music to help nervous patients steady their breathing when they’re in an MRI tube.

“The ‘Mozart effect’ was simply bad science, bogus science,” Berger said. “And a whole industry grew out of it, and remains. The negative effect it had on research was pretty profound. So I hesitate to jump to industrial-strength conclusions before you have some real, solid, scientific data. ... I think it’s premature to say there are panaceas.”

It’s been shown that brain waves elicited by particular low frequencies correlate with altered states of consciousness, intense focus, or sleep inducement, says Berger, who can imagine a cheap device that “would simply put out music, or musical rhythms, at particular rates that, when used effectively, would have a therapeutic effect, whether it’s inducing focus or sleep. I see that as one possibility. But are we there yet? Barring FDA approval and all of that, we’re miles away from doing it scientifically.”